Table of Contents

- Why Good Enough Code is Killing Your Core Web Vitals

- Stop Serving Outdated Media Formats to Modern Browsers

- Caching Strategies That Go Beyond Basic Plugins

- How to Turn Your Content Delivery Network Into a Weapon

- Fixing the Backend Because Cheap Hosting Ruins Good SEO

- Playing Traffic Cop With Resource Prioritization Hints

- Mobile-First Speed Hacks for the Smartphone Era

- Stop Guessing and Start Auditing Like an SEO Professional

- Frequently Asked Questions About Advanced Site Speed

Key Takeaways

- Basic caching plugins are no longer sufficient to pass Google’s strict Core Web Vitals thresholds.

- Server-side optimizations, edge computing, and protocol upgrades like HTTP/3 are mandatory for competitive search rankings.

- Modern image formats and aggressive lazy loading must be strategically balanced to prevent layout shifts and prioritize critical rendering paths.

- Auditing requires real user field data rather than relying solely on isolated lab simulations to measure true business impact.

Let us address the elephant in the digital room: installing a free WordPress caching plugin, clicking “optimize,” and praying to the Google gods is not a legitimate site speed strategy. If your idea of technical SEO is slapping a digital band-aid on bloated server infrastructure, you are bleeding conversions and silently slipping down the search engine results pages. The myth that “good enough” site speed will keep you competitive is a dangerous lie perpetuated by lazy developers and budget-bin hosting companies. We are no longer living in an era where loading a webpage in under three seconds deserves a gold star. Today, speed is a brutal, binary metric. You are either passing Core Web Vitals, or you are handing your hard-earned revenue directly to your competitors.

Slow site speed operates like a silent tax on your entire digital footprint. It kills your conversion rates before a user even sees your hero image, inflates your bounce rates to catastrophic levels, and sends a clear signal to search engines that your user experience is fundamentally broken. If you want to dominate modern search landscapes, we have to move past beginner tactics and delve into deep, technical SEO territory. This requires a ruthless teardown of how your server communicates, how your code is parsed, and how your assets are delivered to the end-user.

Why Good Enough Code is Killing Your Core Web Vitals

Minify CSS, JavaScript, and HTML

The harsh reality of modern web development is that developers write code for humans to read, complete with helpful line breaks, spaces, and extensive comments. However, browsers do not care about your beautifully indented markup; they care about how many bytes they have to download and parse. Every unnecessary character in your HTML, CSS, and JavaScript files adds to the transfer size and to the parser’s workload, delaying the construction of the Document Object Model (DOM) and the CSS Object Model (CSSOM). Minification strips this code bloat by removing whitespace, formatting, and comments. JavaScript minifiers go further, shortening local variable names and eliminating dead code. The result is a smaller file that downloads and parses faster, turning a sluggish, bloated script into a lean set of instructions.
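To make the idea concrete, here is a deliberately naive sketch of what a CSS minifier does. The function name `minifyCss` is hypothetical, and the regex approach ignores strings, `url()` values, and selectors where whitespace is significant, so treat it as an illustration and reach for a real build tool in production.

```typescript
// Naive CSS minifier: illustrative only, not a replacement for a real tool.
function minifyCss(css: string): string {
  return css
    .replace(/\/\*[\s\S]*?\*\//g, "")   // drop /* comments */
    .replace(/\s+/g, " ")                // collapse runs of whitespace
    .replace(/\s*([{}:;,])\s*/g, "$1")   // remove spaces around punctuation
    .replace(/;}/g, "}")                 // drop the last semicolon in a block
    .trim();
}
```

Fed a commented, indented `.hero` rule, this returns a single line with no comment and no optional whitespace.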

Eliminate Render-Blocking Resources

If you have ever stared at a blank white screen waiting for a website to load, you have been a victim of render-blocking resources. Browsers are inherently cautious; when they encounter a stylesheet or a plain script tag in the `<head>` of your document, they pause rendering until that file has been downloaded and processed. This means your beautifully designed hero section cannot paint to the screen until some obscure, third-party analytics script finishes loading. To ruthlessly optimize your site, you must fix the technical SEO issues secretly sabotaging your site speed by aggressively deferring non-critical scripts. By adding the `async` or `defer` attribute to these script tags, you instruct the browser to continue building the visual page elements first, preventing bloated JavaScript from bottlenecking your Largest Contentful Paint (LCP) score.
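As a rough sketch of the difference, the hypothetical helper below emits a script tag for each loading strategy. `defer` downloads in parallel and runs scripts in document order once parsing is done; `async` also downloads in parallel but runs each script as soon as it arrives. The file paths are placeholders.

```typescript
type LoadStrategy = "blocking" | "defer" | "async";

// Emits a <script> tag; "blocking" adds no attribute and stalls HTML parsing.
function scriptTag(src: string, strategy: LoadStrategy): string {
  const attr = strategy === "blocking" ? "" : ` ${strategy}`;
  return `<script src="${src}"${attr}></script>`;
}
```

A reasonable default is `defer` for your own application bundle and `async` for independent third-party tags that do not rely on execution order.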

Upgrade to HTTP/3 Protocols

Most websites are still communicating over legacy protocols that rely on TCP handshakes, causing unnecessary latency before a single byte of data is even transferred. Upgrading to HTTP/3 fundamentally changes how your server talks to a user’s browser. HTTP/3 is built on QUIC, a transport protocol initially developed by Google that runs over UDP instead of TCP. QUIC combines the transport and TLS handshakes to cut connection establishment time, and it eliminates the infamous transport-level “head-of-line blocking” issue, where a single lost packet stalls every stream sharing the connection. By adopting this next-generation protocol, you stop bottlenecking your own server requests, reduce latency for mobile users on unstable networks, and ensure your site data flows with ruthless efficiency. The HTTP/3 Wikipedia page offers a readable overview of the mechanics; the formal specification is RFC 9114.
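One practical detail: browsers usually make their first connection over HTTP/1.1 or HTTP/2 and only switch once the server advertises HTTP/3, commonly through an `Alt-Svc` response header. The helper below is a minimal sketch of that header value; the function name is hypothetical, and your server or CDN normally sets this for you.

```typescript
// Builds an Alt-Svc header value that advertises HTTP/3 on the given UDP port.
// maxAgeSeconds tells the browser how long it may remember the advertisement.
function altSvcHeader(port: number, maxAgeSeconds: number): string {
  return `h3=":${port}"; ma=${maxAgeSeconds}`;
}
```

For example, `altSvcHeader(443, 86400)` yields a value that advertises HTTP/3 on port 443 for one day.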

Stop Serving Outdated Media Formats to Modern Browsers

Force Next-Gen Image Formats

Clinging to JPEGs and PNGs in the modern era of web performance is akin to using dial-up internet; it works, but it is painfully inefficient. Next-generation image formats like WebP and AVIF offer vastly superior compression compared to their older counterparts. WebP typically comes in roughly 30% smaller than a JPEG of comparable visual quality, while AVIF pushes those boundaries even further. To optimize at an advanced level, you must serve these formats automatically using server-side conversion and content negotiation. When a browser requests an image, the server inspects the `Accept` request header to see whether the browser supports AVIF or WebP and serves the most lightweight version available, slashing media payload sizes and preserving vital bandwidth for your critical CSS and JavaScript.
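A minimal sketch of that negotiation step, assuming the server has already generated AVIF, WebP, and JPEG variants of each image. The function name and the JPEG fallback are assumptions for illustration. Any response chosen this way should also carry a `Vary: Accept` header so caches do not hand an AVIF file to a browser that cannot decode it.

```typescript
type ImageFormat = "avif" | "webp" | "jpeg";

// Picks the lightest format the browser says it accepts, falling back to JPEG.
function pickImageFormat(acceptHeader: string): ImageFormat {
  const accepted = acceptHeader
    .split(",")
    .map((part) => (part.split(";")[0] || "").trim().toLowerCase());
  if (accepted.indexOf("image/avif") !== -1) return "avif";
  if (accepted.indexOf("image/webp") !== -1) return "webp";
  return "jpeg";
}
```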

Deploy Aggressive Lazy Loading

Loading every single image and video on a long-form page the exact moment a user arrives is a monumental waste of server resources and user data. Advanced lazy loading must be implemented aggressively for heavy videos and below-the-fold images to prioritize initial rendering. However, basic lazy loading is not enough; you must use the Intersection Observer API to trigger downloads shortly before an element scrolls into the viewport. This ensures the asset is ready by the time the user’s eyes reach it, without competing for bandwidth during the initial page load. Furthermore, you must never lazy load critical, above-the-fold assets, as this artificially delays your LCP metric and can push the page past the Core Web Vitals threshold.
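The rule about never lazy loading above-the-fold assets is easy to encode. The sketch below swaps in the simpler browser-native `loading` attribute rather than an Intersection Observer, and the `PageImage` shape and `aboveTheFold` flag are hypothetical. It also emits explicit `width` and `height` so the browser can reserve space and avoid layout shifts.

```typescript
interface PageImage {
  src: string;
  alt: string;
  width: number;
  height: number;
  aboveTheFold: boolean; // assumption: your template knows where the image sits
}

// Above-the-fold images load eagerly; everything else is deferred by the browser.
function imgTag(img: PageImage): string {
  const loading = img.aboveTheFold
    ? 'loading="eager"'
    : 'loading="lazy" decoding="async"';
  return `<img src="${img.src}" alt="${img.alt}" width="${img.width}" height="${img.height}" ${loading}>`;
}
```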

Utilize Responsive Image Attributes

Serving a massive, 4K desktop banner image to a user browsing on a four-inch mobile screen is an amateur mistake that actively harms your search rankings. Utilizing responsive image attributes, specifically the `srcset` and `sizes` attributes, allows you to stop wasting bandwidth. These attributes act as a menu of options, providing the browser with a list of different image sizes and allowing the device’s internal logic to download only the version perfectly scaled for its current viewport and pixel density. By offering tailored image resolutions, you ensure mobile users receive significantly smaller payloads, dramatically accelerating render times for cellular connections.
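Here is a small sketch of how that menu of options can be generated. The file naming scheme (`banner-480w.webp` and so on) is an assumption; match it to however your image pipeline names its resized variants.

```typescript
// Builds a srcset value listing one candidate file per available width.
function buildSrcset(basePath: string, ext: string, widths: number[]): string {
  return widths.map((w) => `${basePath}-${w}w.${ext} ${w}w`).join(", ");
}
```

Pair the result with a `sizes` value such as `(max-width: 600px) 100vw, 50vw`, which tells the browser how wide the image will be displayed so it can pick the smallest candidate that still looks sharp.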

Caching Strategies That Go Beyond Basic Plugins

Set Strict Browser Caching Policies

Basic caching often relies on generic time-to-live (TTL) settings that expire far too quickly. Advanced SEO optimization demands strict, aggressive browser caching policies via `Cache-Control` headers. For static elements like logos, fonts, and core stylesheets, you instruct the browser to store these files locally for up to a year. A lifetime that long is only safe when the filename changes whenever the content does, for example by including a content hash, so that a new release is fetched under a new URL. When a user navigates to a second page on your site, or returns a week later, the browser bypasses the network entirely for these assets, fetching them from its local cache. This turns repeat visits into almost instantaneous experiences, profoundly lowering the aggregate load times that search engines measure.
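A sketch of such a policy, assuming your build step puts a content hash of at least eight hex characters in static filenames (for example `app.3f9a1c2b.css`). The function name, the regex, and the one-hour fallback are illustrative choices, not a standard.

```typescript
// Chooses a Cache-Control value based on what kind of file is being served.
function cacheControlFor(path: string): string {
  const fingerprinted = /\.[0-9a-f]{8,}\.(css|js|woff2|png|jpg|webp|avif|svg)$/;
  if (fingerprinted.test(path)) {
    // Hashed filename: content never changes under this URL, so cache for a year.
    return "public, max-age=31536000, immutable";
  }
  if (/\.html?$/.test(path) || path.charAt(path.length - 1) === "/") {
    // HTML must be revalidated so visitors pick up new asset URLs.
    return "no-cache";
  }
  // Unhashed static files get a short lifetime as a compromise.
  return "public, max-age=3600";
}
```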

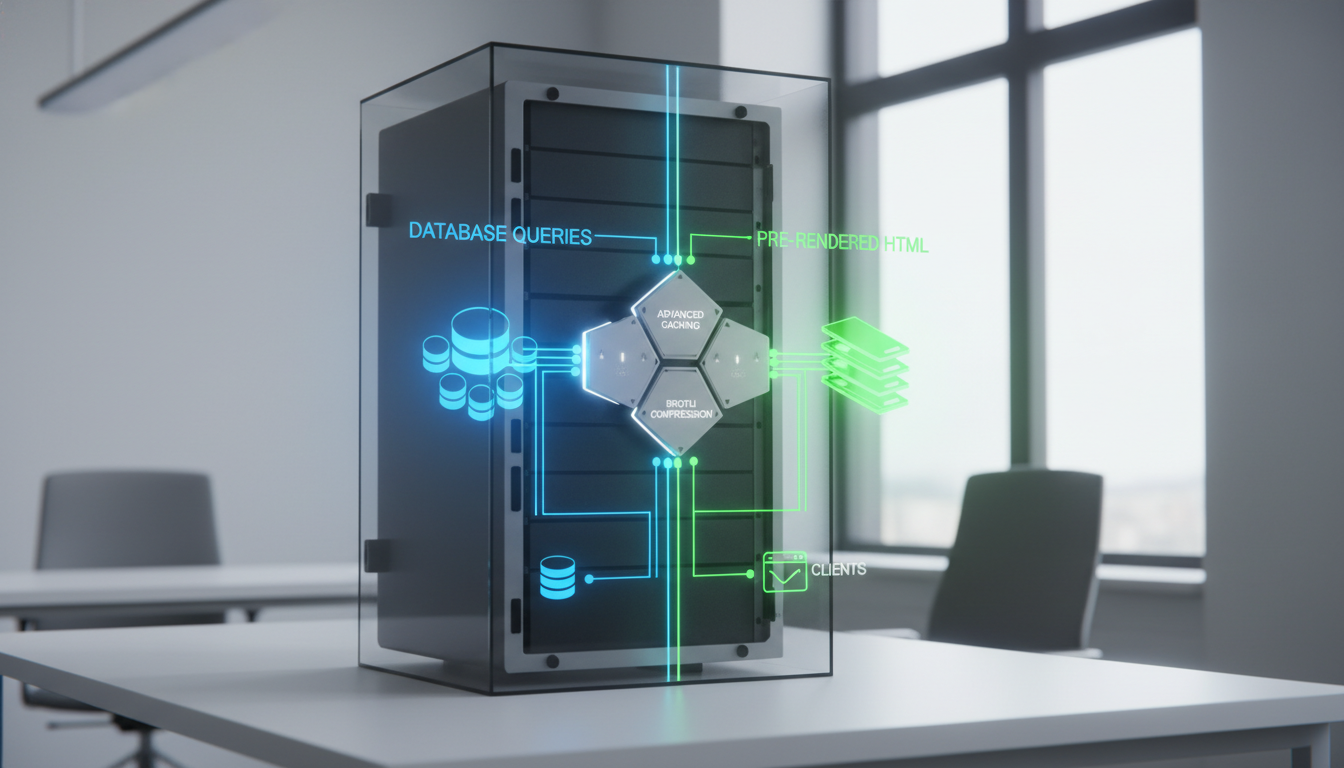

Deploy Server-Side Caching

When a user requests a dynamic page, the server typically executes PHP code, queries the database, stitches the HTML together, and sends it back. This takes time—time you do not have. Deploying advanced server-side caching solutions like Varnish acts as a hyper-fast middleman. Varnish sits in front of your web server, intercepts requests, and serves fully rendered, static HTML versions of your dynamic pages directly from random-access memory (RAM). This completely bypasses the arduous PHP execution and database querying process, delivering instant content to users and massively reducing the load on your server’s CPU.
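Conceptually, a full-page cache is a lookup table from URL to rendered HTML with an expiry time. The class below is a toy in-process model of that idea, not Varnish configuration; the `render` callback stands in for the expensive PHP and database work, and the clock is passed in explicitly to keep the example easy to follow.

```typescript
interface CachedPage {
  html: string;
  expiresAt: number;
}

class PageCache {
  private pages = new Map<string, CachedPage>();
  private ttlMs: number;

  constructor(ttlMs: number) {
    this.ttlMs = ttlMs;
  }

  // Returns cached HTML while it is fresh; otherwise renders and stores it.
  get(url: string, nowMs: number, render: () => string): string {
    const hit = this.pages.get(url);
    if (hit !== undefined && hit.expiresAt > nowMs) return hit.html;
    const html = render();
    this.pages.set(url, { html, expiresAt: nowMs + this.ttlMs });
    return html;
  }
}
```

With a one-second TTL, two requests half a second apart trigger a single render, and a request after expiry triggers a fresh one.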

Implement Object Caching

Even with full-page caching, dynamic elements like shopping carts, user dashboards, and real-time inventory checks must bypass the page cache to remain accurate. This is where your database can become a massive bottleneck. Implementing persistent object caching utilizing tools like Redis rescues your database from repetitive, agonizingly slow queries. Instead of forcing the database to calculate the same complex data points thousands of times a minute, Redis stores the results of these queries in memory. When the next user requests that data, the application pulls it instantly from the object cache, keeping dynamic, uncacheable pages blazing fast.
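The pattern behind this is often called cache-aside: check the cache, fall back to the database on a miss, and invalidate the entry when the underlying data changes. The sketch below uses an in-process `Map` as a stand-in for Redis, which in reality is a networked store shared across servers; the class name and key format are hypothetical.

```typescript
class ObjectCache<T> {
  private store = new Map<string, T>();
  hits = 0;
  misses = 0;

  // Returns the cached value, or runs the expensive query once and remembers it.
  getOrCompute(key: string, compute: () => T): T {
    if (this.store.has(key)) {
      this.hits += 1;
      return this.store.get(key) as T;
    }
    this.misses += 1;
    const value = compute();
    this.store.set(key, value);
    return value;
  }

  // Call this when the underlying data changes, e.g. after an order is placed.
  invalidate(key: string): void {
    this.store.delete(key);
  }
}
```

The invalidation step is what keeps inventory counts and cart totals accurate; skipping it trades correctness for speed.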

How to Turn Your Content Delivery Network Into a Weapon

Choose an Enterprise-Grade CDN

A basic, free-tier Content Delivery Network might offload some images, but it will not provide the global routing supremacy needed for top-tier SEO. You must select an enterprise-grade CDN like Cloudflare that matches your global traffic needs rather than settling for shared, congested edge nodes. Enterprise CDNs utilize Anycast routing protocols that intelligently funnel user requests to the closest geographic data center, bypassing internet traffic jams. This level of infrastructure ensures that a user in Tokyo accesses your site just as fast as a user in New York, fundamentally leveling the playing field for international SEO dominance.

Push Optimization to the Edge

The true power of a modern CDN lies not just in storing files, but in executing code at the “edge” of the network, as physically close to the user as possible. Pushing optimization to the edge allows you to manipulate request headers, resize images on the fly, and even serve cached HTML entirely from the CDN node. By leveraging edge computing functions, you bypass your origin server entirely for a vast majority of requests. This means your primary hosting server only handles the most complex, dynamic tasks, while the CDN absorbs massive traffic spikes without sending those requests on a slow round trip to your origin.

Balance Security and Latency

Security firewalls are mandatory, but poorly configured Web Application Firewalls (WAF) can introduce severe latency, forcing every request to run through a gauntlet of slow, regular expression checks. To wield your CDN effectively, you must balance security and latency by locking down protocols without adding unnecessary DNS lookup delays or routing overhead. This involves optimizing your TLS handshakes, enforcing strict SSL policies at the edge, and tuning your WAF rules to instantly block malicious bot traffic without delaying legitimate user requests. A fast site that is constantly bogged down by its own security checks will ultimately fail Google’s speed audits.

Fixing the Backend Because Cheap Hosting Ruins Good SEO

Slash Time to First Byte (TTFB)

Time to First Byte (TTFB) is the ultimate metric of server responsiveness; it measures how many milliseconds pass between the browser’s request and the arrival of the very first byte of the response. If your TTFB is slow, every subsequent speed metric will inevitably be delayed. You cannot fake a fast TTFB with clever JavaScript. You must ditch shared hosting environments, where hundreds of websites cannibalize the same CPU and RAM, and upgrade to high-performance dedicated or highly optimized cloud solutions. You need raw processing power to answer requests quickly, which is exactly why cheap SEO is a trap and why small businesses should budget for infrastructure that genuinely supports growth.

Audit Database Query Efficiency

A bloated, unoptimized CMS database acts like a ball and chain on your server’s processing speed. Every time a plugin adds a row to your database, or every time you save a new post revision, the database tables become fragmented. You must audit database query efficiency to clean up this digital hoarding. By running routine optimization scripts to clear out transient options, orphaned metadata, and spam comments, you ensure the database indices remain compact. A streamlined database answers queries in milliseconds, preventing your server from choking silently during complex dynamic loads.

Force Server-Level Compression

Transmitting raw HTML, CSS, and JS files over the network without compression is a gross mismanagement of bandwidth. While Gzip has been the industry standard for years, relying on it today leaves performance on the table. You must configure your Apache or Nginx servers to use Brotli compression. Brotli, an open-source data compression library, uses a modern dictionary-based algorithm that significantly outperforms Gzip, often resulting in file sizes that are 15% to 20% smaller. By forcing this advanced compression at the server level, you drastically reduce the sheer volume of data traveling through the network cables, yielding faster load times and happier search engine crawlers.
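The server decides between Brotli and Gzip per request by reading the browser’s `Accept-Encoding` header. The function below is a simplified sketch of that decision; it is not how Apache or Nginx implement it, and it only understands an explicit `q=0` opt-out rather than full quality-value ranking.

```typescript
type Encoding = "br" | "gzip" | "identity";

// Prefers Brotli, then Gzip, then no compression, based on Accept-Encoding.
function pickEncoding(acceptEncoding: string): Encoding {
  const offered = acceptEncoding.split(",").map((p) => p.trim().toLowerCase());
  const allows = (name: string): boolean =>
    offered.some((p) => {
      const [token, ...params] = p.split(";").map((s) => s.trim());
      return token === name && params.indexOf("q=0") === -1;
    });
  if (allows("br")) return "br";
  if (allows("gzip")) return "gzip";
  return "identity";
}
```

Whatever encoding is chosen, the response should include `Vary: Accept-Encoding` so shared caches keep the compressed variants apart.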

Playing Traffic Cop With Resource Prioritization Hints

Command the Browser With Directives

Browsers are smart, but they do not know the hierarchy of your specific business goals. You must take control and play traffic cop using resource prioritization hints. By injecting `preconnect`, `prefetch`, and `preload` directives into your HTML head, you force the browser to establish early connections to important third-party domains or to download critical assets before they are even discovered in the CSS. For a deep dive into the syntax, the Mozilla Developer Network documentation on preload explains how to command the browser to prioritize exact files. This ensures your hero banner or custom fonts are ready to render the exact millisecond they are needed.
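As a sketch, the helpers below generate three kinds of hint as `<link>` tags: an early connection (`preconnect`), a high-priority font download (`preload`), and a low-priority fetch for a likely next page (`prefetch`). The function names and URLs are placeholders. Note that font preloads need the `crossorigin` attribute even when the font is served from your own domain.

```typescript
// Opens a connection early; set withCors for origins that serve fonts.
function preconnectTag(origin: string, withCors: boolean): string {
  return `<link rel="preconnect" href="${origin}"${withCors ? " crossorigin" : ""}>`;
}

// Fetches a WOFF2 font at high priority before the CSS references it.
function preloadFontTag(href: string): string {
  return `<link rel="preload" href="${href}" as="font" type="font/woff2" crossorigin>`;
}

// Fetches a resource for a probable future navigation at low priority.
function prefetchTag(href: string): string {
  return `<link rel="prefetch" href="${href}">`;
}
```

Use these sparingly: every hint competes for the same bandwidth, so preloading everything is equivalent to prioritizing nothing.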

Extract and Inline Critical CSS

When a browser downloads an external CSS file, it must read the entire document before it can paint anything to the screen. To eliminate this delay, you must extract your “critical CSS” (the absolute bare minimum styles required to render the above-the-fold content) and inline it directly into the HTML document’s `<head>`. By doing this, the browser parses the HTML and immediately has the styling rules needed to paint the visible screen instantly. The rest of the CSS is then loaded asynchronously in the background. This technique provides the illusion of instantaneous loading, drastically improving your First Contentful Paint (FCP) scores.

Optimize Third-Party Font Loading

Custom web fonts are beautiful, but they are notorious for causing invisible text flashes or sudden layout shifts as they pop into existence. This ruins the user experience and destroys your Cumulative Layout Shift (CLS) scores. To optimize third-party font loading, you must utilize the `font-display: swap` descriptor within your `@font-face` declarations. This instructs the browser to immediately display a fast-loading system fallback font while the custom font is downloading in the background. Once the custom font is ready, the browser swaps it in. Combined with preloading your most critical font files, this ensures your typography never stands in the way of your technical SEO.

Mobile-First Speed Hacks for the Smartphone Era

Exploit Progressive Web App (PWA) Features

Mobile search dominates global internet traffic, and traditional web technologies often struggle against the fluid performance of native mobile applications. To bridge this gap, you must exploit Progressive Web App (PWA) features. By installing service workers—powerful JavaScript files that run independently of the main browser thread—you can intercept network requests and deliver offline caching capabilities. Service workers can serve the foundational application shell instantly from the mobile device’s cache, providing app-like mobile performance speeds even when the user is driving through a tunnel with spotty cellular reception.

Audit Mobile-First Indexing Metrics

Google evaluates your website based on its mobile version; if your desktop site is blazing fast but your mobile site is a bloated disaster, your rankings will tank. You must explicitly audit mobile-first indexing metrics to identify and fix responsive design speed traps. This involves scrutinizing complex CSS grid layouts that cause high CPU rendering times on mid-tier mobile processors, and identifying massive DOM trees that cause mobile browsers to freeze during scrolling. Fixing these specifically penalizing factors ensures you align perfectly with Google’s mobile-first indexing algorithms.

Evaluate Accelerated Mobile Pages (AMP)

Years ago, Google pushed Accelerated Mobile Pages (AMP) as the ultimate solution for mobile speed. Today, the landscape is much more nuanced. You must critically assess if AMP frameworks still provide a competitive return on investment for your specific business niche. While AMP forces extreme performance by restricting custom JavaScript and utilizing Google’s edge cache, it also strips away vital branding control and lead generation functionality. Modern optimization techniques often allow standard mobile pages to rival AMP speeds, making it imperative to weigh the severe design restrictions of AMP against your actual conversion goals.

Stop Guessing and Start Auditing Like an SEO Professional

Track Real User Monitoring (RUM)

Relying exclusively on simulated lab tests like Google Lighthouse is a recipe for false confidence. Lab data simulates a specific device on a specific network connection, which rarely reflects the chaotic reality of your actual audience. To audit like a true professional, you must implement Real User Monitoring (RUM). By pairing your own in-page measurements with the Chrome User Experience Report (CrUX), Google’s public dataset of field data from real Chrome users, you see how actual people experience your site across various devices and network conditions. Analyzing this raw, unvarnished data is the only way to pinpoint exactly where your site speed is failing in the real world.
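Field data is judged at the 75th percentile, not the average, so one fast test run proves nothing. The sketch below computes a nearest-rank 75th percentile from a list of LCP samples and rates it against Google’s published LCP thresholds (2.5 seconds for good, 4 seconds for poor). The function names are hypothetical, and collecting the samples from real visitors is assumed to happen elsewhere.

```typescript
type Rating = "good" | "needs improvement" | "poor";

// Nearest-rank 75th percentile of a list of field samples.
function percentile75(samples: number[]): number {
  if (samples.length === 0) throw new Error("no samples collected");
  const sorted = samples.slice().sort((a, b) => a - b);
  const rank = Math.ceil(0.75 * sorted.length);
  return sorted[rank - 1] as number;
}

// Rates an LCP value in milliseconds against the published thresholds.
function rateLcp(ms: number): Rating {
  if (ms <= 2500) return "good";
  if (ms <= 4000) return "needs improvement";
  return "poor";
}
```

A page can have a median LCP well under 2.5 seconds and still fail, because a slow quarter of visits on weak phones or networks drags the 75th percentile over the line.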

Automate Performance Regression Testing

A website is never truly “finished.” Every marketing pixel, new feature rollout, or CMS update has the potential to silently destroy your meticulously crafted site speed. You must automate performance regression testing to defend your search rankings. By configuring advanced auditing tools like WebPageTest to run automated speed checks on your key landing pages every single night, you set up a digital tripwire. When a junior developer accidentally uploads an uncompressed 5MB hero video, automated alerts will notify you immediately, allowing you to catch speed-killing code updates long before Google’s crawlers notice and penalize your rankings.
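The tripwire itself can be as simple as comparing each night’s measurements against a performance budget. The sketch below assumes the metrics have already been collected by whatever tool you run; it does not call the WebPageTest API, and the metric names and limits are hypothetical.

```typescript
// Returns one message per metric that exceeds its budgeted limit.
function budgetViolations(
  measured: Record<string, number>,
  budget: Record<string, number>
): string[] {
  const violations: string[] = [];
  for (const metric of Object.keys(budget)) {
    const limit = budget[metric];
    const value = measured[metric];
    if (limit !== undefined && value !== undefined && value > limit) {
      violations.push(`${metric}: ${value} exceeds budget ${limit}`);
    }
  }
  return violations;
}
```

Wire the returned list into your alerting or fail the deployment when it is non-empty, and that 5MB hero video gets caught the night it ships.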

Translate Speed Data into ROI

Technical SEO metrics mean absolutely nothing to a C-suite executive unless they are tied to revenue. The ultimate advanced technique is learning how to translate speed data into concrete business ROI. When you shave 800 milliseconds off your load time, you must correlate that directly to a drop in cart abandonment or a spike in lead form submissions. Understanding why your SEO metrics are lying to you about business growth helps you move beyond vanity scores. By proving that bounce rate reduction and conversion rate optimization are the direct, profitable results of your technical speed improvements, you secure the budget and authority to continue ruthlessly optimizing your infrastructure.
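The arithmetic behind that conversation is simple enough to sketch. Every input here (sessions, conversion rates, average order value) is a hypothetical figure you would replace with your own analytics data measured before and after the speed work.

```typescript
// Monthly revenue from traffic, conversion rate (0 to 1), and average order value.
function monthlyRevenue(sessions: number, conversionRate: number, avgOrderValue: number): number {
  return sessions * conversionRate * avgOrderValue;
}

// Revenue difference attributable to a change in conversion rate.
function revenueLift(sessions: number, rateBefore: number, rateAfter: number, avgOrderValue: number): number {
  return (
    monthlyRevenue(sessions, rateAfter, avgOrderValue) -
    monthlyRevenue(sessions, rateBefore, avgOrderValue)
  );
}
```

With 100,000 monthly sessions, an average order of 80, and a conversion rate that moves from 2.0% to 2.2%, the lift works out to about 16,000 a month, which is a number an executive will actually read.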

Frequently Asked Questions About Advanced Site Speed

How do Core Web Vitals directly impact my specific SEO rankings?

Google’s algorithm officially shifted from looking at basic, archaic speed metrics to prioritizing a set of user-centric experience signals known as Core Web Vitals. These vitals measure loading performance (Largest Contentful Paint), visual stability (Cumulative Layout Shift), and interactivity (Interaction to Next Paint). If your site consistently fails these thresholds, Google interprets the field data as a poor user experience, and that signal works against you wherever faster, more stable competitors offer comparable content.

What is the real difference between browser, server, and object caching?

Browser caching tells the user’s own device to save static files like images and CSS, so repeat visits load them locally instead of over the network. Server caching intercepts incoming requests and delivers a pre-built, static HTML snapshot to bypass slow PHP processing entirely. Object caching sits between your application and the database, memorizing the answers to complex, repetitive SQL queries so the database does not buckle under the weight of dynamic user requests.

How can I identify and eliminate hidden render-blocking resources?

To unearth hidden bottlenecks, you must run your URLs through advanced waterfall charts in Chrome DevTools or WebPageTest. Look for JavaScript or CSS files that load sequentially before the “First Paint” vertical line. Once identified, you can eliminate these blockers by inlining critical styles, adding “defer” or “async” attributes to non-essential scripts, or dynamically injecting third-party tags only after the main thread finishes its vital work.

Are third-party scripts ruining my speed, and how do I fix them?

Yes, excessive third-party scripts for analytics, live chats, and advertising trackers are infamous for hijacking the main browser thread and devastating interactivity metrics. You fix them by strictly auditing their value, mercilessly deleting unused trackers, and implementing a tag manager to conditionally load these scripts. Delaying chat widgets until a user actively scrolls, or hosting analytics scripts locally, reclaims control over your site’s rendering pipeline.
