Why Your Massive Website is Bleeding Crawl Budget and How to Stop It

Table of Contents

- The Uncomfortable Truth About Crawl Budgets

- Diagnosing the Drain With Google Search Console

- Plugging the Nastiest Crawl Budget Leaks

- Rolling Out the Red Carpet for Googlebot

- Technical Upgrades That Force Faster Crawling

- Advanced Tactics for Enterprise-Level Beasts

- Paranoia Pays Off With Ongoing Monitoring

- Frequently Asked Questions

Key Takeaways

- Googlebot operates on strictly finite resources, meaning unoptimized, bloated sites actively sabotage their own indexation and revenue potential.

- Infinite loops caused by faceted navigation and endless redirect chains are the silent killers draining your daily server allowance.

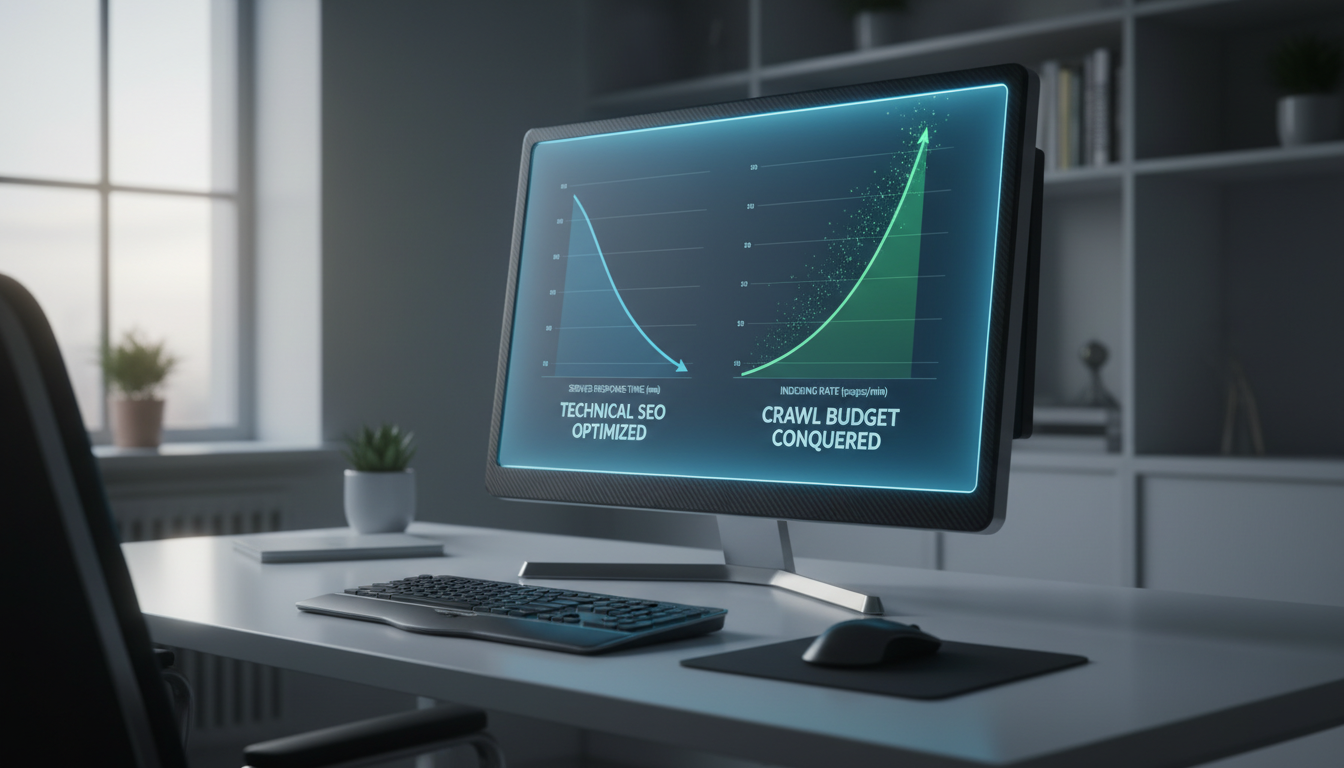

- Optimizing server response times and ruthless canonicalization practically force Google to process your most valuable pages faster.

- Analyzing raw server logs remains the only infallible, non-negotiable method for enterprise websites to expose exactly what search engines are doing behind the scenes.

Listen, you can pump out Pulitzer-worthy content all day, painstakingly optimize your on-page elements, and build authority until you are blue in the face. But if Googlebot physically cannot reach your pages because your website is an impenetrable labyrinth of digital dead ends, you are lighting your organic revenue on fire. For scaling small businesses and large e-commerce empires alike, a mismanaged crawl budget is not just a theoretical SEO annoyance. It is a direct bottleneck to your bottom line. When high-converting product pages or heavily researched guides sit in the dark unindexed, your competitors happily steal the traffic that rightfully belongs to you.

The SEO industry is saturated with self-proclaimed gurus dispensing terrible advice about crawl budget, often applying enterprise-level anxieties to twenty-page local business sites. We are going to strip away the fluff. If you are managing a massive digital footprint, every single server request matters. Understanding how to wrangle Googlebot, plug the nasty leaks in your site architecture, and command search engines to prioritize your money-making pages is the ultimate technical SEO superpower. It is time to stop politely asking Google to notice your expanding website and start forcing the issue.

The Uncomfortable Truth About Crawl Budgets

What crawl budget actually is versus SEO industry myths

Most practitioners in the SEO space talk about crawl budget as if it were a mystical, arbitrary allowance handed down by the search gods. In reality, the concept is far more mechanical and ruthlessly pragmatic. Your crawl budget is simply the number of URLs Googlebot can and wants to crawl on your site within a given timeframe. It is not a penalty system or a hidden score you can gamify. It is a physical constraint born out of server limitations and algorithmic prioritization. The confusion often stems from failing to differentiate between the two distinct pillars of this concept: the crawl rate limit and the crawl demand.

The crawl rate limit represents the maximum number of simultaneous connections Googlebot can make to your server without bringing it to its knees. If your server is robust and responds with lightning speed, Googlebot raises this limit, allowing for deeper and more frequent crawling. Conversely, crawl demand is a measure of how badly Google actually wants to index your content. This demand is influenced by the popularity of your site, the staleness of your existing indexed pages, and overarching entity relevance. If you have a massive website with zero backlinks and terrible user engagement, your crawl demand will tank, rendering your theoretically high crawl rate limit completely useless. Understanding this distinction is the first step toward reclaiming your lost indexation.

To see this explained straight from the source, you can consult the official documentation over at Google Search Central, which provides the technical baseline for managing large sites. However, official guidelines often sanitize the brutal reality of how poorly structured websites actually behave in the wild. Real-world SEO requires you to look beyond the basic definitions and recognize that every unoptimized parameter URL is actively stealing attention away from your core revenue-generating pages.

The brutal math behind Googlebot’s crawling limits

Google is not running a charity. Operating a global network of web crawlers requires astronomical amounts of electricity, server space, and computational power. Every time Googlebot fetches a URL, renders the JavaScript, parses the HTML, and attempts to understand the context, it costs Alphabet money. Consequently, Google assigns crawl priority based on a brutal, algorithmic triage system. Websites that generate high search volume, possess massive PageRank, and frequently update their content are granted VIP access to Google’s resources. Sites that hoard duplicate content and throw server errors are relegated to the bottom of the queue.

The algorithmic math dictates that Googlebot will continuously adjust its behavior based on your site’s performance. If your server responds to a fetch request in 200 milliseconds, Googlebot quickly moves to the next URL. If it takes three seconds for your server to spit out a basic HTML document, Googlebot assumes your infrastructure is struggling and intentionally throttles its crawl rate to prevent an accidental Distributed Denial of Service (DDoS) attack. This self-regulating behavior means that a sluggish server literally forces Google to abandon your website before discovering your newest, most critical updates.

Furthermore, this prioritization is constantly recalculated. Google monitors the “staleness” of your URLs. A news publisher pumping out hourly updates trains Googlebot to camp on their homepage, waiting for fresh links. An e-commerce site that only updates its inventory once a quarter trains Googlebot to visit sporadically. If you suddenly add ten thousand new products to a historically stagnant website, Googlebot will not magically adjust its crawl budget overnight. You have to earn that increased capacity through consistently fast response times and clear signals of content popularity.

Why small sites shouldn’t panic but your growing empire must

If you run a local service business with a fifty-page WordPress site, you can stop reading now. Sites under ten thousand pages almost never face genuine crawl budget constraints unless they have accidentally triggered an infinite redirect loop or completely blocked their site via robots.txt. For small sites, indexation issues are almost always a symptom of poor content quality or a lack of internal linking, not a lack of Googlebot bandwidth. The panic that small business owners feel regarding crawl budget is largely manufactured by software companies trying to sell unnecessary technical audit tools.

However, if you manage a growing e-commerce empire, a massive programmatic SEO project, or a sprawling publisher site, crawl budget is your lifeblood. When you have hundreds of thousands of dynamic URLs, product filters, and user-generated pages, the sheer volume of data overwhelms standard crawling patterns. A single misconfiguration in your faceted navigation can spawn two million useless permutations in an afternoon. If Googlebot spends its daily allowance crawling these empty filter pages, your newly launched product lines will remain invisible to searchers.

This is why scaling businesses must pivot from passive content creation to aggressive technical defense. You are no longer just publishing pages; you are managing a massive digital ecosystem. Every architectural decision you make, from URL structures to internal linking hierarchies, directly impacts how efficiently search engines can digest your website. For large-scale operations, optimizing your crawl budget is the foundational prerequisite before any off-page or on-page SEO strategy can actually take effect.

Diagnosing the Drain With Google Search Console

Spotting the dreaded “Discovered – currently not indexed” status

There is no feeling quite as hollow for a technical SEO as opening Google Search Console and seeing a massive, hockey-stick spike in the “Discovered – currently not indexed” report. This specific status is the ultimate red flag that your website has hit a critical crawl limit. It means Google knows these URLs exist. It has found the internal links or processed the XML sitemap. It simply looked at the sheer volume of pages, checked its allotted time for your server, and decided it could not be bothered to actually fetch them.

When this status balloons, it is rarely an issue of content quality. Instead, it is a glaring indicator that your server cannot handle the required load, or your site architecture is so bloated that Googlebot has given up halfway through the maze. You must treat this report as an emergency diagnostic dashboard. By isolating which directories or subfolders are trapped in this “Discovered” purgatory, you can reverse-engineer exactly where your crawl budget is being exhausted.

For example, if your new blog posts are ranking within hours, but your new product category pages are stuck in this status for weeks, you know the bottleneck is localized to your e-commerce architecture. Perhaps your product tags are generating thousands of thin pages that Googlebot is prioritizing over your actual inventory. Identifying the exact nature of the stuck URLs provides the roadmap for where you need to deploy noindex tags, robots.txt exclusions, or aggressive canonicalization.

Finding actionable data inside GSC crawl stats

Most site owners obsess over the Performance report in GSC, entirely ignoring the goldmine hidden under Settings within the Crawl Stats report. This dashboard provides a raw, unfiltered look at exactly how Googlebot interacts with your hosting infrastructure. By breaking down the “pages crawled per day” metric, you can establish a baseline of your site’s current crawl capacity. If you have two million pages but Googlebot is only crawling five thousand a day, it will take over a year just to refresh your existing index.
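The arithmetic behind that estimate is worth having on hand for your own numbers. Here is a minimal sketch; the function name is made up for illustration, and it assumes every crawl request lands on a different URL, which real crawling never does, so read the result as a best case.

```python
import math


def days_to_full_recrawl(total_urls: int, crawled_per_day: int) -> int:
    """Best-case number of days for a crawler to touch every URL once."""
    if crawled_per_day <= 0:
        raise ValueError("crawled_per_day must be positive")
    return math.ceil(total_urls / crawled_per_day)


# The figures from the paragraph above: two million pages, five thousand crawls a day.
print(days_to_full_recrawl(2_000_000, 5_000))  # 400
```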

The most critical metric within this report is the “average response time.” This graph directly correlates with your crawl volume. You will almost always notice an inverse relationship: when server response times spike due to backend issues or traffic surges, the number of pages crawled plummets. This data removes the guesswork from technical SEO. If you see a massive drop in indexation, and the Crawl Stats report shows your average response time jumped from 300 milliseconds to 2.5 seconds, you do not need to rewrite your content. You need to call your hosting provider immediately.

Furthermore, the Crawl Stats report categorizes requests by purpose: refresh versus discovery. If Googlebot is spending ninety percent of its time refreshing existing pages and only ten percent discovering new ones, your site architecture is likely too deep, or your internal linking is failing to push authority to newly published content. Balancing these two purposes requires a strategic overhaul of your sitemaps and internal navigation menus to ensure fresh content receives the spotlight it deserves.

Identifying index bloat and slow content indexing

Index bloat is the creeping disease that slowly strangles a massive website’s crawl budget. It occurs when thousands of low-value, duplicate, or utterly useless pages are allowed to slip into Google’s index, eating up the crawl allowance that should be reserved for your money pages. You can identify this by comparing the number of valid URLs in your XML sitemap against the total number of pages indexed in Google Search Console. If your sitemap contains fifty thousand carefully curated URLs, but GSC shows two hundred thousand indexed pages, you have a massive bloat problem.

This bloat directly causes slow content indexing. When Googlebot visits your site, it wanders down endless rabbit holes of author archives, date-based directories, paginated comment sections, and thin tag pages. By the time it finishes parsing this garbage, its crawl session expires. Consequently, the incredibly valuable, high-intent landing page you just published gets pushed to the back of the queue. Your new content is effectively being held hostage by your legacy technical debt.

To diagnose this accurately, you must look for patterns in the URLs that are draining your budget. Are they dynamic search parameter URLs? Are they old promotional landing pages from five years ago? You can even run tests to see how quickly Google responds to changes on your site. Implementing 3 easy SEO tests you should run today can quickly reveal if your core pages are suffering from delayed indexation due to surrounding index bloat.
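One rough way to start looking for those patterns is to bucket a URL export by site section and by whether the URL carries a query string. The sketch below assumes you already have a flat list of URLs from a crawl or log export; the sample URLs and the function name are invented for illustration.

```python
from collections import Counter
from urllib.parse import urlsplit


def bucket_urls(urls):
    """Count URLs by first path segment, flagging those with query strings."""
    counts = Counter()
    for url in urls:
        parts = urlsplit(url)
        segments = [s for s in parts.path.split("/") if s]
        section = "/" + segments[0] if segments else "/"
        kind = "parameterized" if parts.query else "clean"
        counts[(section, kind)] += 1
    return counts


# Made-up sample data standing in for a real export.
sample = [
    "https://example.com/shoes/red-runner",
    "https://example.com/shoes?color=red&size=10",
    "https://example.com/shoes?size=10&color=red",
    "https://example.com/tag/sale",
    "https://example.com/",
]
for (section, kind), n in bucket_urls(sample).most_common():
    print(section, kind, n)
```

A section where the parameterized count dwarfs the clean count is usually the first place to look.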

Using third-party site audit tools to validate errors

While Google Search Console is indispensable, it is notoriously slow to update and often obfuscates the deeper technical realities of your site structure. To truly validate your crawl budget errors, you must simulate the Googlebot experience yourself. Running a deep, comprehensive technical crawl using industry-standard software is the only way to uncover the hidden traps sabotaging your indexation.

Using an advanced crawler like Screaming Frog allows you to extract every single URL, redirect hop, and broken link exactly as a search engine encounters them. By setting the tool’s user-agent to Googlebot and configuring the crawl speed to mimic a search engine’s behavior, you can map out the actual architecture of your site. This process invariably reveals the nightmare scenarios that GSC hides: chains of five consecutive redirects, thousands of orphan pages with no internal links, and server-crushing JavaScript dependencies.

The true power of third-party audits lies in cross-referencing their data with GSC. When Screaming Frog identifies a cluster of ten thousand dynamically generated URLs that return a 200 OK status, and GSC confirms that Googlebot is actively crawling those exact URLs instead of your product pages, you have found the smoking gun. This irrefutable data gives you the ammunition needed to force your development team to implement proper URL parameter handling and consolidate the site architecture.

Plugging the Nastiest Crawl Budget Leaks

Murdering duplicate content and infinite faceted navigation loops

E-commerce platforms are notorious for generating infinite faceted navigation loops, arguably the most destructive crawl budget leak in modern SEO. When a user filters a product category by color, size, brand, and price, the CMS dynamically generates a unique URL for that specific combination. While this is fantastic for user experience, it is catastrophic for search engines. The combinations “Red Shoes, Size 10, Nike” and “Nike Shoes, Red, Size 10” often produce two different URLs with the exact same content, tricking Googlebot into crawling an endless, spiraling matrix of duplicates.

To murder these infinite loops, you must be draconian with your parameter handling. You cannot rely on canonical tags alone to fix faceted navigation, as Google still has to crawl the duplicate parameter URL to read the canonical tag in the first place, entirely defeating the purpose of saving crawl budget. Instead, you must systematically block search engines from accessing certain parameter combinations altogether.

This requires a coordinated effort between your robots.txt file and your internal linking strategy. By using “nofollow” attributes on your minor sorting filters—like “sort by price” or “sort by newest”—and disallowing those specific query strings in your robots.txt, you effectively sever the infinite loop. This forces Googlebot to stay on the main, optimized category pages rather than wandering endlessly through your database permutations.
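To see why the permutations are pure duplicates, it helps to normalize two filter URLs and compare them. The sketch below is a complementary tactic to blocking, not a replacement for it: it assumes your platform lets you control how filter links are generated, and the parameter names in `SORT_ONLY_PARAMS` are hypothetical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical filters that only reorder results and never deserve their own URL.
SORT_ONLY_PARAMS = {"sort", "order"}


def normalize_facet_url(url: str) -> str:
    """Drop sort-only parameters and put the remaining ones in a fixed order."""
    parts = urlsplit(url)
    params = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in SORT_ONLY_PARAMS
    ]
    params.sort()
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(params), ""))


a = normalize_facet_url("https://example.com/shoes?color=red&size=10&brand=nike")
b = normalize_facet_url("https://example.com/shoes?brand=nike&sort=price&color=red&size=10")
print(a == b)  # True
```

If every internal filter link is emitted in this normalized form, the crawler only ever meets one URL per real combination.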

Exterminating soft 404s and endless redirect chains

A soft 404 is a technical insult to Googlebot. It occurs when a page is completely dead, empty, or missing its core content, but the server still stubbornly returns a 200 OK success code. This forces Google to download the page, render it, and analyze the text, only to algorithmically deduce that the page is actually useless. This wastes an immense amount of computational power. You must ruthlessly exterminate soft 404s by ensuring your server returns a hard 404 (Not Found) or 410 (Gone) status code the instant a page is legitimately removed from your site.

Endless redirect chains are equally offensive to your crawl budget. When Googlebot hits URL A, which redirects to URL B, which redirects to URL C, every hop is a separate request that eats into its allotted crawl time for your server. Google's crawler documentation puts the hard ceiling at 10 redirect hops, but you should not plan around it: each hop adds latency and another fetch before any content arrives. The longer the chain, the more likely it is that the final destination URL, the one you actually want indexed, gets crawled late or not at all.

The solution is tedious but mandatory. You must extract your entire redirect mapping and replace every single chain with a direct, single-hop redirect from the original legacy URL straight to the final destination URL. Bypassing the intermediate hops reclaims a massive amount of crawl efficiency and ensures that link equity flows directly to your most important pages without being diluted by server latency.
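Collapsing the chains is mechanical once the redirect map is in one place. Here is a minimal sketch, assuming the map has been exported as a simple source-to-target dictionary; the paths are placeholders, and sources caught in a loop are dropped so you can review them by hand.

```python
def flatten_redirects(redirects: dict[str, str]) -> dict[str, str]:
    """Map every source URL straight to its final destination.

    Sources caught in a redirect loop are left out of the result.
    """
    flattened = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        while target in redirects:
            if target in seen:  # loop detected, no final destination exists
                target = None
                break
            seen.add(target)
            target = redirects[target]
        if target is not None:
            flattened[source] = target
    return flattened


chain = {"/a": "/b", "/b": "/c", "/c": "/d", "/x": "/y", "/y": "/x"}
print(flatten_redirects(chain))
# {'/a': '/d', '/b': '/d', '/c': '/d'}
```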

Evicting thin, spammy, and low-quality pages

There is a pervasive, hoarding mentality among older webmasters who believe that every page ever published must be preserved for the sake of overall site mass. This is a fatal error. Hoarding low-value, thin, or spammy pages actively harms your high-value content by dragging down your site’s overall quality score and diluting your crawl demand. If Googlebot consistently encounters pages with fifty words of outdated text or auto-generated user profiles, it will eventually assume the entire domain is low quality and drastically reduce its visit frequency.

You must become a ruthless landlord and evict the dead weight. Conduct a massive content pruning exercise to identify pages that have generated zero organic traffic and zero backlinks over the last two years. If these pages do not serve a critical business function or compliance requirement, they need to go. Do not redirect them to the homepage, as that creates a confusing user experience and a soft 404 signal.

Instead, serve a definitive 410 Gone status code for these purged URLs. A 410 code explicitly tells Googlebot that the page has been permanently and intentionally deleted and will never return, prompting the crawler to strip it from the index immediately and stop requesting it in the future. Removing this dead weight acts like a pressure release valve, instantly freeing up bandwidth for Googlebot to finally focus on the content that actually drives revenue.

Rolling Out the Red Carpet for Googlebot

Weaponizing your robots.txt to block useless crawler traps

Your robots.txt file is the frontline bouncer of your digital nightclub. It is the absolute first file Googlebot requests before accessing anything else on your domain. Weaponizing this file means aggressively and proactively disallowing access to directories, scripts, and URL parameters that offer zero SEO value. If you have an internal site search function that generates unique query URLs, you must block the search parameter immediately. Otherwise, competitors or malicious bots can spam your internal search, generating millions of dynamic pages that Googlebot will waste its life trying to index.

You must strategically disallow backend directories, user account login pages, and shopping cart URLs. There is absolutely no reason for a search engine to crawl your checkout process. However, this weaponization requires precision. A single misplaced asterisk or forward slash can accidentally block your entire website from search engines. A bare “Disallow: /”, for instance, shuts crawlers out of every URL on the domain.

Furthermore, you must be hyper-vigilant about not blocking the critical JavaScript or CSS assets that Googlebot needs to render your site. If you disallow the folders housing your visual styling or core functional scripts, Googlebot will see your site as a broken, text-only disaster from 1998. The goal is to corral the crawler toward your money pages, not blindfold it entirely.
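Because one stray character can do so much damage, it is worth testing a draft rule set against URLs that must stay crawlable before you deploy it. The sketch below uses Python's standard-library `urllib.robotparser`; the rules and paths are hypothetical, and note that this parser does plain prefix matching and does not implement Google's `*` and `$` pattern extensions, so it is only a sanity check for prefix rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules; the paths are placeholders for your own site structure.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart/
Disallow: /checkout/
Disallow: /account/
"""


def blocked_urls(robots_txt: str, urls, agent: str = "Googlebot"):
    """Return the URLs from `urls` that the given rules disallow for `agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(agent, url)]


must_stay_crawlable = [
    "https://example.com/",
    "https://example.com/shoes/red-runner",
    "https://example.com/assets/site.css",
]
print(blocked_urls(ROBOTS_TXT, must_stay_crawlable))  # []
print(blocked_urls(ROBOTS_TXT, ["https://example.com/search?q=shoes"]))
```

An empty first list means none of your protected URLs, including the CSS asset, were caught by the rules.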

Slicing giant XML sitemaps to force Google’s attention

XML sitemaps are intended to be a pristine, highly prioritized roadmap for search engines. Yet, many enterprise sites dump fifty thousand URLs into a single, massive sitemap file and hope for the best. While Google technically allows up to fifty thousand URLs per sitemap, providing a massive, monolithic file makes it incredibly difficult to isolate indexation issues. When you have a giant sitemap, finding out why ten specific pages are not indexed is like finding a needle in a digital haystack.

To force Google’s attention and maintain absolute control over your indexation, you must slice massive sitemaps into smaller, logically categorized files tied together by a sitemap index. Break them down by subfolder, product category, or publication month. Create a dedicated sitemap strictly for your blog posts, another for your category pages, and another for your product inventory. By segmenting your sitemaps to contain only a few thousand URLs each, you can pinpoint exactly which site sections are suffering from poor indexation rates inside Google Search Console.

Crucially, these sitemaps must remain impeccably clean. They should only contain canonical URLs that return a 200 OK status code. If your sitemap is littered with 301 redirects, 404 errors, or non-canonicalized parameter URLs, Googlebot will quickly lose trust in the file and start ignoring it entirely. A pristine, categorized sitemap acts as a VIP fast-pass, ensuring Googlebot goes exactly where you want it to go without hesitation.
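The slicing itself is easy to automate. Here is a rough sketch that groups URLs by their first path segment, chunks each group, and builds a sitemap index pointing at the resulting files. The file naming scheme and the chunk size are assumptions, and writing the individual sitemap files is left out.

```python
from collections import defaultdict
from urllib.parse import urlsplit
from xml.sax.saxutils import escape


def segment_sitemaps(urls, max_per_file=5000):
    """Group URLs by first path segment, then chunk each group into files."""
    groups = defaultdict(list)
    for url in urls:
        segments = [s for s in urlsplit(url).path.split("/") if s]
        groups[segments[0] if segments else "root"].append(url)

    files = {}
    for section, members in sorted(groups.items()):
        for start in range(0, len(members), max_per_file):
            name = f"sitemap-{section}-{start // max_per_file + 1}.xml"
            files[name] = members[start:start + max_per_file]
    return files


def sitemap_index(base_url, filenames):
    """Build the XML for a sitemap index that lists each segment file."""
    entries = "".join(
        f"  <sitemap><loc>{escape(base_url + name)}</loc></sitemap>\n"
        for name in filenames
    )
    return (
        '<?xml version="1.0" encoding="UTF-8"?>\n'
        '<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">\n'
        f"{entries}</sitemapindex>\n"
    )


urls = [f"https://example.com/blog/post-{i}" for i in range(3)] + [
    f"https://example.com/products/item-{i}" for i in range(5)
]
files = segment_sitemaps(urls, max_per_file=2)
print(list(files))
print(sitemap_index("https://example.com/", files))
```

Submitting each segment separately in Google Search Console then gives you an indexation figure per section rather than one number for the whole site.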

Using ruthless canonicalization to merge duplicate signals

Canonical tags are the ultimate diplomatic tool in technical SEO, allowing you to peacefully resolve duplicate content disputes without resorting to heavy-handed redirects or server blocks. When you have multiple URLs serving the same purpose—like tracking IDs for marketing campaigns or minor product variations—proper canonicalization explicitly tells Google which version is the master copy. This consolidates the indexing signals and link equity into a single, powerful URL.

However, you must be ruthless and exact in your application. Cross-domain and self-referencing canonicals must be implemented flawlessly across your entire database. If URL A points to URL B as the canonical, but URL B points back to URL A, you create a canonical loop that paralyzes the crawler. Furthermore, if the content on the canonicalized page is entirely different from the master page, Google will completely ignore your canonical tag and index both pages anyway, doubling your crawl budget waste.

This consolidation is especially crucial for tracking parameters. If you run extensive email marketing or social media campaigns, UTM parameters can generate hundreds of duplicate URLs. By ensuring every single page on your site has a strict, self-referencing canonical tag that strips out these UTMs, you guarantee that all the external traffic and social signals funnel their equity directly into your clean, optimized root URLs.
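Stripping the tracking parameters before you print the canonical tag can be sketched in a few lines. The parameter list below is an assumption (the `utm_` prefix plus two common click IDs); extend it with whatever your own campaigns append.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed tracking parameters; extend with whatever your campaigns append.
TRACKING_PREFIXES = ("utm_",)
TRACKING_KEYS = {"gclid", "fbclid"}


def canonical_url(url: str) -> str:
    """Return the URL with tracking parameters and the fragment removed."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith(TRACKING_PREFIXES)
        and key.lower() not in TRACKING_KEYS
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


print(canonical_url("https://example.com/shoes?utm_source=newsletter&utm_medium=email"))
# https://example.com/shoes
print(canonical_url("https://example.com/shoes?page=2&utm_campaign=spring"))
# https://example.com/shoes?page=2
```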

Building internal link structures that funnel authority

Googlebot does not navigate your site by magic; it follows links. If your website architecture resembles a deep, convoluted silo where a user has to click ten times to reach a product page from the homepage, Googlebot will never reach that product. Deeply buried pages suffer from extreme crawl starvation. To combat this, you must build flat internal link architectures that force authority down into your deepest money pages with as few hops as possible.

This is achieved through aggressive, strategic mega-menus, comprehensive footer navigation, and dynamic related-product modules. A flat architecture ensures that even a site with one million pages requires no more than three or four clicks to reach any given URL. This significantly reduces the time and energy Googlebot must expend to discover your entire database.
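Click depth is measurable, so you do not have to guess whether your architecture is flat enough. The sketch below runs a breadth-first search over an internal link graph; it assumes you have exported that graph from a crawler as a page-to-links dictionary, and the tiny example site is invented.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], start: str = "/") -> dict[str, int]:
    """Breadth-first search giving the minimum click count from `start` to each page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


site = {
    "/": ["/shoes", "/blog"],
    "/shoes": ["/shoes/running"],
    "/shoes/running": ["/shoes/running/red-runner"],
    "/blog": [],
    "/orphan": ["/"],
}
depths = click_depths(site)
too_deep = [page for page, depth in depths.items() if depth > 2]
orphans = [page for page in site if page not in depths]
print(too_deep)  # ['/shoes/running/red-runner']
print(orphans)   # ['/orphan']
```

Pages that never appear in the result are orphans: nothing reachable from the homepage links to them.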

Moreover, you must prioritize internal links to your high-revenue, high-converting pages. If a specific product category is driving seventy percent of your business, it should receive the vast majority of your internal homepage links. Funneling authority in this manner explicitly signals to Googlebot that these specific pages are the most important assets on your domain, effectively commanding the search engine to crawl them more frequently than your obscure, lower-tier content.

Technical Upgrades That Force Faster Crawling

Trimming server response times to keep Googlebot moving

We have established that server speed dictates crawl capacity, but understanding how to physically trim those response times is where the real technical battle is won. Time to First Byte (TTFB) is the absolute most critical metric for crawl budget optimization. If your server takes over a second just to acknowledge a request, Googlebot will throttle its crawl rate aggressively. Upgrading your server hardware, transitioning from shared hosting to a dedicated server or VPS, and implementing aggressive caching layers are non-negotiable requirements for enterprise sites.

Optimizing server response requires deep backend auditing. You must look at how your CMS queries your database. Heavy, unoptimized database queries are the primary culprit behind sluggish TTFB. By implementing object caching like Redis or Memcached, you can store the results of frequently run database queries in RAM, allowing your server to bypass the database entirely and serve HTML documents to Googlebot in a matter of milliseconds. This rapid-fire response effectively tricks Google into expanding your crawl rate limit.

If you are serious about diagnosing these foundational backend delays, you must look into how to fix the technical SEO issues secretly sabotaging your site speed. Upgrading your infrastructure directly translates into higher crawl caps, faster indexation, and ultimately, a more dominant presence in the search engine results pages.

Slashing blocking JavaScript and CSS

The modern web’s reliance on heavy JavaScript frameworks like React, Angular, and Vue has created a secondary, often hidden crawl budget crisis. Googlebot employs a two-wave indexing process. In the first wave, it crawls the raw HTML. If your site relies on client-side JavaScript to render its core content or navigation links, Googlebot must defer that rendering to a second wave, which requires significantly more computational resources. This heavy client-side rendering practically paralyzes search engine crawlers, burning through your crawl budget at an alarming rate.

To slash this blocking friction, massive websites must implement Server-Side Rendering (SSR) or dynamic rendering. SSR executes the JavaScript on your server before it ever reaches the browser or the search engine bot, delivering a fully formed, pristine HTML document. This eliminates the need for Googlebot to execute complex scripts, drastically reducing the required render time and saving massive amounts of crawl budget per page.

Furthermore, you must inline your critical CSS and defer the rest. If Googlebot has to download five different massive stylesheet files before it can understand the visual hierarchy of your content, you are introducing unnecessary latency. Minifying your code, stripping out unused CSS, and delivering lightweight, asynchronous scripts ensures that when Googlebot requests a page, it receives the data instantly without being choked by bloated design assets.

Offloading heavy lifting to a CDN

For enterprise sites serving a global audience, the physical distance between your origin server and Google’s crawling infrastructure introduces unavoidable network latency. If your single origin server is in London, but Googlebot is crawling from California, the sheer physical distance of data transmission eats into your crawl time. Offloading this heavy lifting to a Content Delivery Network (CDN) like Cloudflare completely neutralizes this geographical disadvantage.

A CDN operates a massive, globally distributed edge network of servers. When you route your traffic through a CDN, copies of your website’s static assets—like images, CSS, and HTML files—are cached on servers physically located just miles away from Google’s crawl centers. This means that when Googlebot requests a URL, it is served almost instantaneously from a local edge server rather than forcing a cross-continental database query.

By absorbing the brunt of this traffic, a CDN significantly reduces the load on your origin server. This ensures that your core server resources remain entirely dedicated to processing dynamic requests and handling complex database queries. The result is a dramatically faster global content delivery architecture that directly inflates your overall crawl efficiency, allowing search engines to index thousands of pages with zero friction.

Advanced Tactics for Enterprise-Level Beasts

Analyzing raw server logs to expose Googlebot’s behavior

For truly massive, enterprise-level beasts, Google Search Console and third-party crawlers are not enough. They provide summaries, simulations, and heavily filtered data. The only infallible, indisputable source of truth regarding crawl budget is your raw server log file. Analyzing server logs allows you to see the exact timestamp, status code, user-agent, and IP address of every single request made to your server. It strips away the SEO theory and exposes the cold, hard reality of exactly what Googlebot is doing on your domain.

Log file analysis reveals the hidden crawl traps that GSC entirely ignores. It shows you if malicious bots are spoofing the Googlebot user-agent to scrape your data, artificially driving up your server load. It uncovers hidden directories and forgotten legacy subdomains that Google is inexplicably crawling tens of thousands of times a day. By parsing access logs, you can generate exact hit counts for every URL, providing irrefutable evidence of which sections of your site are suffering from crawl starvation.
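Producing those hit counts does not require specialist software. Here is a minimal sketch that assumes the common “combined” access log format used by Apache and nginx; the sample lines are invented. It only filters on the user-agent string, so it will also count spoofed bots unless you verify the IP addresses separately.

```python
import re
from collections import Counter

# Assumes the common "combined" access log format used by Apache and nginx.
LOG_LINE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines):
    """Count requests claiming a Googlebot user-agent, per section and status."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match or "Googlebot" not in match["agent"]:
            continue
        path = match["path"].split("?", 1)[0]
        segments = [s for s in path.split("/") if s]
        section = "/" + segments[0] if segments else "/"
        hits[(section, match["status"])] += 1
    return hits


# Made-up sample lines for illustration only.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    f'66.249.66.1 - - [10/Mar/2025:06:25:01 +0000] "GET /api/v1/old?id=7 HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Mar/2025:06:25:02 +0000] "GET /api/v1/old?id=8 HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Mar/2025:06:25:03 +0000] "GET /products/red-runner HTTP/1.1" 200 9000 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.9 - - [10/Mar/2025:06:25:04 +0000] "GET /products/red-runner HTTP/1.1" 200 9000 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
for (section, status), count in googlebot_hits(sample).most_common():
    print(section, status, count)
```

Dividing each section's count by the total gives the share of crawl activity it absorbs, which is the figure that tends to win arguments with engineering.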

Cross-referencing this log data with your GSC crawl stats and Screaming Frog audits creates the ultimate technical SEO trifecta. When you can prove to your engineering team that Googlebot wasted thirty percent of its daily bandwidth hitting a deprecated API endpoint because you hold the raw access logs in your hand, you cut through the corporate bureaucracy. Server logs turn technical SEO arguments into undeniable mathematical facts.

Taming international hreflang tags

Expanding an e-commerce empire into multiple countries introduces an exponential complexity to your crawl budget. Implementing international hreflang tags is necessary to serve localized content to different regions, but it fundamentally multiplies your URL count. If you have ten thousand products available in five different languages and three different currencies, you suddenly have a sprawling matrix of one hundred and fifty thousand URLs. If your hreflang architecture is improperly configured, this expansion will obliterate your crawl capacity instantly.

Improper multi-region setups, such as conflicting hreflang tags or tags pointing to broken URLs, force Googlebot to cross-reference every single language permutation manually to resolve the errors. This creates an unmanageable spiderweb of validation requests. You must tame this beast by ensuring absolute symmetry in your localized linking. Every hreflang tag must point to a 200 OK canonical URL, and the return tags from the alternate language pages must perfectly match the origin.
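The symmetry requirement is straightforward to check in bulk. The sketch below assumes you have already extracted each page's hreflang annotations into a dictionary (page URL to language-code-to-alternate-URL); the URLs are invented, and it checks return tags only, not whether each target returns a 200.

```python
def missing_return_tags(hreflang: dict[str, dict[str, str]]) -> list[tuple[str, str]]:
    """Find (page, alternate) pairs where the alternate does not link back.

    `hreflang` maps each page URL to its {language code: alternate URL} annotations.
    """
    problems = []
    for page, alternates in hreflang.items():
        for alternate in alternates.values():
            if alternate == page:
                continue  # self-reference needs no return tag
            if page not in hreflang.get(alternate, {}).values():
                problems.append((page, alternate))
    return problems


annotations = {
    "https://example.com/en/shoes": {
        "en": "https://example.com/en/shoes",
        "de": "https://example.com/de/schuhe",
        "fr": "https://example.com/fr/chaussures",
    },
    "https://example.com/de/schuhe": {
        "en": "https://example.com/en/shoes",
        "de": "https://example.com/de/schuhe",
    },
    "https://example.com/fr/chaussures": {
        "fr": "https://example.com/fr/chaussures",
    },
}
print(missing_return_tags(annotations))
# [('https://example.com/en/shoes', 'https://example.com/fr/chaussures')]
```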

Furthermore, you must implement strict technical safeguards. If you auto-translate pages that receive zero traffic or generate localized pages for countries where you do not actually have inventory, you are actively burning your crawl budget. You must use IP-based dynamic serving carefully, preferring distinct URL structures (like subdirectories for each language) combined with pristine hreflang logic to ensure Googlebot can index your global footprint without suffocating your main server.

Prioritizing pages during massive content migrations

There is no scenario more dangerous to a massive website’s crawl budget than a full-scale redesign or domain migration. When you change your site architecture, transition to a new CMS, or restructure your URL taxonomy, you are forcing Googlebot to re-evaluate and re-crawl your entire digital existence from scratch. If you dump hundreds of thousands of new URLs and 301 redirects onto the server all at once, you will instantly crash your crawl rate limit, plunging your organic traffic into an abyss.

To survive this, you must prioritize your pages and execute the migration in controlled, phased batches. Never flip the switch on a massive enterprise site all at once. Begin by migrating your highest-revenue, top-tier categories and their corresponding redirects. Monitor your server logs and GSC crawl stats to ensure Googlebot successfully processes the new architecture. Once the crawl rate stabilizes, release the next batch of URLs.
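As a rough illustration of the phased approach, the following Python sketch orders pages by revenue, splits them into batches, and offers a simple stability check to gate the next release. The revenue figures, the batch size, and the ten percent tolerance are all assumptions you would tune for your own site, and the helper names are hypothetical.

```python
def plan_migration_batches(pages, batch_size):
    """Split (url, revenue) pairs into batches, highest revenue first."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    ordered = sorted(pages, key=lambda page: page[1], reverse=True)
    urls = [url for url, _revenue in ordered]
    return [urls[i:i + batch_size] for i in range(0, len(urls), batch_size)]

def crawl_rate_stable(daily_crawl_requests, tolerance=0.10):
    """True if every daily value is within `tolerance` of the window mean.

    Assumption: "stable" means low day-to-day variation over the window
    you pass in (for example, the last seven days of crawl requests).
    """
    if not daily_crawl_requests:
        return False
    mean = sum(daily_crawl_requests) / len(daily_crawl_requests)
    if mean == 0:
        return False
    return all(abs(value - mean) / mean <= tolerance
               for value in daily_crawl_requests)
```

In practice you would release the first batch, feed the following days of crawl request counts into the stability check, and only release the next batch once it returns True.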

Failing to control this process is exactly what turns an e-commerce redesign into an SEO time bomb. A phased migration framework ensures your server remains responsive, your most critical money pages maintain their indexation, and Googlebot is never overwhelmed by a tsunami of newly generated technical debt.

Paranoia Pays Off With Ongoing Monitoring

Setting up automated alerts for sudden crawl drops

Crawl budget optimization is not a project you finish; it is a permanent operational state. Because massive websites are dynamic ecosystems with constant code deployments, inventory updates, and marketing parameter creations, new crawl traps are inevitably spawned every week. Relying on manual checks to catch these issues guarantees you will be too late. You must cultivate a healthy level of paranoia by setting up automated alerts for sudden crawl drops or traffic anomalies.

Using advanced analytics platforms or custom Python scripts connected to the GSC API, you can establish automated alert triggers. If your average server response time spikes by more than twenty percent, or if your “Discovered – currently not indexed” count jumps by five thousand URLs overnight, your system should instantly ping your Slack channel or email inbox. Catching a crawl rate collapse within hours rather than weeks is the difference between a minor technical hiccup and a catastrophic revenue loss.
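The threshold logic itself is simple. Here is a minimal Python sketch that compares two daily snapshots and returns alert messages. How the snapshots are collected and how the messages are delivered (Slack, email) is deliberately left out; the dictionary keys and the function name are hypothetical, and the default thresholds mirror the twenty percent and five thousand URL figures above.

```python
def crawl_alerts(previous, current,
                 response_spike=0.20, not_indexed_jump=5000):
    """Compare two daily snapshots and return a list of alert messages.

    Each snapshot is an assumed dict with two keys:
      "avg_response_ms"         average server response time in ms
      "discovered_not_indexed"  count of discovered, not indexed URLs
    """
    alerts = []

    prev_ms = previous["avg_response_ms"]
    cur_ms = current["avg_response_ms"]
    # Relative increase in response time versus the previous snapshot.
    if prev_ms > 0 and (cur_ms - prev_ms) / prev_ms > response_spike:
        alerts.append(
            f"Response time rose from {prev_ms} ms to {cur_ms} ms"
        )

    # Absolute overnight jump in the not-indexed count.
    jump = current["discovered_not_indexed"] - previous["discovered_not_indexed"]
    if jump >= not_indexed_jump:
        alerts.append(f"Discovered, not indexed count jumped by {jump} URLs")

    return alerts
```

Run on a schedule, an empty list means silence, and any non-empty list gets forwarded to whatever channel your team actually watches.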

These custom alerts ensure that your SEO team acts as a proactive defense mechanism rather than a reactive cleanup crew. By immediately investigating any statistical anomaly in crawler behavior, you can isolate the rogue plugin, the broken database query, or the flawed CMS update that caused the issue, neutralizing the threat before Google algorithmically demotes your entire domain.

Scheduling ruthless technical SEO audits

Automation is critical, but it cannot replace the necessity of scheduled, ruthless technical deep-dives. Growing websites naturally accumulate structural bloat. Marketing teams create temporary landing pages that are never deleted, developers deploy quick-fix scripts that slow down the server, and content teams publish thousands of tags that create duplicate architectures. To combat this entropy, you must mandate monthly or quarterly technical SEO audits.

These audits must be uncompromising. You re-crawl the entire massive domain using Screaming Frog, you pull the latest gigabytes of raw server logs, and you cross-reference everything against the current sitemap matrix. You are actively hunting for newly generated index bloat, creeping redirect chains, and any sign of parameter-based infinite loops.
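The cross-referencing step boils down to set arithmetic plus a walk through your redirect map. The Python sketch below shows one way it might look, assuming you have exported three URL lists (sitemap, site crawl, Googlebot hits from the logs) and a source-to-target redirect mapping. All input shapes, key names, and function names are illustrative assumptions, not the export format of any specific tool.

```python
def audit_crossref(sitemap_urls, crawled_urls, googlebot_log_urls):
    """Compare three URL lists and report the mismatches between them."""
    sitemap = set(sitemap_urls)
    crawled = set(crawled_urls)
    logged = set(googlebot_log_urls)
    return {
        # Pages you want indexed that Googlebot never requested.
        "in_sitemap_not_hit_by_googlebot": sorted(sitemap - logged),
        # Possible index bloat: Googlebot spends requests outside the sitemap.
        "hit_by_googlebot_not_in_sitemap": sorted(logged - sitemap),
        # Possible orphans: listed in the sitemap but unreachable by crawl.
        "in_sitemap_not_found_by_crawl": sorted(sitemap - crawled),
    }

def redirect_chain(url, redirects, max_hops=10):
    """Follow a {source: target} redirect map and return the hop list.

    Stops when a URL repeats (a loop) or the chain exceeds max_hops.
    """
    chain = [url]
    seen = {url}
    while chain[-1] in redirects and len(chain) <= max_hops:
        next_url = redirects[chain[-1]]
        chain.append(next_url)
        if next_url in seen:
            break  # loop detected; the last element repeats an earlier hop
        seen.add(next_url)
    return chain
```

Any chain longer than two entries is a candidate for flattening into a single redirect, and any chain whose last entry repeats an earlier one is a loop that needs fixing before the next audit.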

This continuous purging process ensures that your site architecture remains lean, fast, and entirely optimized for search engine bots. It is a necessary administrative overhead that directly protects your organic visibility. If you let these audits slide, the technical debt will silently compound until your site is once again paralyzed by crawl budget exhaustion.

Staying ahead of Google’s crawling algorithm changes

Finally, true technical SEO mastery requires acknowledging that the rules of the game are constantly evolving. Google continually adjusts how its rendering engine processes JavaScript, how it values dynamic content, and how its data centers allocate resources globally. What worked flawlessly to optimize crawl budget two years ago might now be considered an outdated, inefficient practice that Google’s algorithms actively ignore.

You must stay aggressively informed by monitoring Google Search Central documentation updates, algorithmic change logs, and patent filings related to information retrieval systems. When Google announces a shift toward prioritizing Core Web Vitals or alters how it handles specific HTTP status codes, your enterprise architecture must pivot immediately.

By treating your massive website as a living technical entity that must adapt to the search engine’s evolving infrastructure, you future-proof your indexation. Staying ahead of these crawling algorithm changes guarantees that no matter how large your digital footprint grows, Googlebot will always treat your money pages like absolute royalty.

Frequently Asked Questions

Does page speed actually impact my website’s crawl budget?

Yes, absolutely. Page speed is directly tied to server response time, which is the foundational metric determining your crawl rate limit. Faster page loads mean your server processes requests efficiently, allowing Googlebot to crawl significantly more pages within its allotted time frame. Furthermore, excellent Core Web Vitals heavily influence your overarching site quality scores, which in turn elevate your overall crawl demand, creating a compounding positive effect on your indexation.

How long does it take Google to adjust crawl limits?

Changing crawl limits is not an overnight process. Because Google calculates crawl capacity based on historical server reliability and long-term content quality, changes to server hardware or massive technical cleanups take weeks, sometimes months, to fully register in the algorithm. You must be patient and avoid expecting instantaneous miracles after fixing technical issues; the search engine needs sustained proof that your site architecture is permanently stabilized before it raises your bandwidth allowance.

Can I just buy better hosting to solve crawl issues?

Buying better hosting is a critical step, but it is only half the solution. Upgrading to a dedicated server or premium cloud infrastructure will absolutely fix artificial crawl rate limits caused by slow response times, but it does absolutely nothing to fix low crawl demand. If your site architecture is still generating infinite faceted navigation loops and your content is thin, Googlebot will simply crawl your garbage pages faster. You must pair superior hosting with ruthless architectural optimization.

What is the exact difference between crawl rate and crawl demand?

Crawl rate refers specifically to the mechanical limit of how many requests Googlebot can physically make to your server without crashing it; it is a measure of your hosting capacity and technical speed. Crawl demand, on the other hand, is an algorithmic measure of how much Google actually wants to crawl your site based on your content’s popularity, backlink profile, and frequency of updates. You need a high crawl rate to facilitate a high crawl demand.
